If you’ve been on LinkedIn recently, you’ve probably seen your feed littered with polling questions.

It could be something as simple as, “which of these items do you like for breakfast” or something more specific such as, “Zero Trust is good because…”

Either way, I have a bit of an issue with how these are framed, run, and subsequently interpreted.

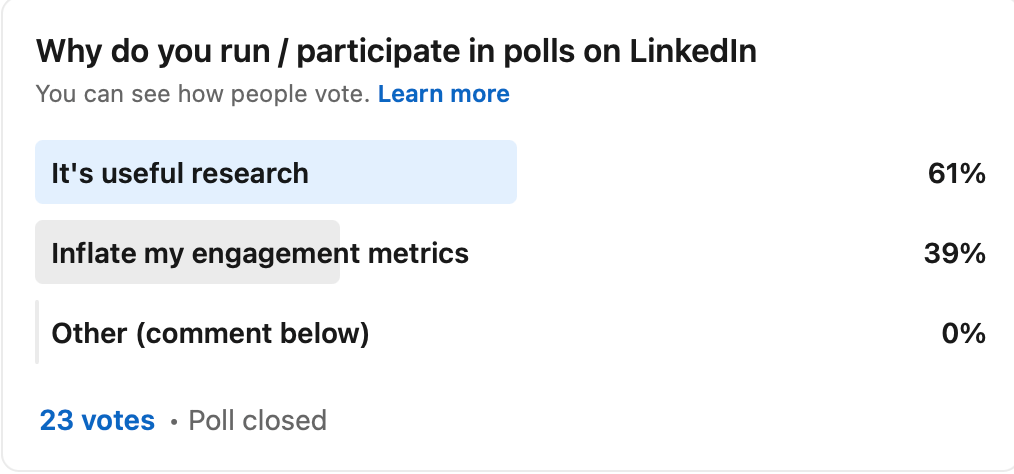

I ran a poll yesterday for this very purpose and, as you can see above, the results were shocking. Almost 40 percent of respondents stated that they only do these polls to inflate their engagement metrics! Shock horror – it’s a sham! But it’s also precisely the kind of headline you can see in a security article or blog.

When you take a closer look at the poll I ran, the options are framed horribly, there aren’t enough options, and only 23 people voted.

“Nearly two-thirds of professionals say LinkedIn Polls are useful research” is another headline I could write from this poll.

But you see how farcical this all is. I don’t believe any of these polls provide meaningful data that can be used. Of course, if you’re looking for confirmation (especially if you’re biased), then polling your connections, who are colleagues, friends, or people who like the content you follow, will tend to get you the validation you want.

I’m obviously using this poor little poll as an example, but we have a much larger problem in the security industry where meaningless polls are touted as research.

If you look at various security news outlets, you’ll see headlines similar to these:

- 76% of gamers were financially affected by a cyberattack, losing $700+ on average

- Homeworkers increase your risk by 87%

- 66% of data centres don’t know how many firewalls they have

And the only thing I can do is shake my head. How many people were asked the question? How were the respondents selected? How was the data analysed? How were the questions framed? Were biases and limitations stated? The list goes on.

Once you unpack some of those questions, the sensationalist nature of the headlines begins to crumble away.

Now, don’t get me wrong. I don’t believe that many of these polls or surveys are done in bad faith. But without careful consideration, incorrect data can be collected, or it can be misinterpreted.

I’m not advocating that every single survey needs to be conducted to an academic standard with peer reviews and defence – I’m not that weird. What I do think is that when the results are published, they shouldn’t be buried under a sensationalist headline. Yes, I know marketing departments will want to get some coverage. But it’s not always that simple.

Share the sample size and how many people responded. A survey of 2,500 people is probably more reliable than my poll of 23. Think about the questions: what will you do with the answers? Do you have a bias? Are the questions leading the respondent down a particular path?
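To put a rough number on the gap between 2,500 respondents and 23, here is a minimal sketch using the textbook margin-of-error formula for a proportion. The function name `margin_of_error` is my own, and the calculation assumes a simple random sample and a 95% confidence level, which a poll of your own connections certainly is not. Treat the result as a best case rather than a real measure of accuracy.

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Approximate 95% margin of error for a proportion p, given n respondents.

    Assumes a simple random sample (an assumption, and a generous one
    for a social media poll). p=0.5 is the worst case for the formula.
    """
    return z * math.sqrt(p * (1 - p) / n)

for n in (23, 2500):
    print(f"n={n}: +/- {margin_of_error(n) * 100:.1f} percentage points")
```

Under those assumptions, my poll of 23 comes out at roughly plus or minus 20 percentage points, against about 2 for a survey of 2,500. And that is before accounting for who was asked and how the options were worded.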

One of the reasons I like the Verizon Data Breach Investigations Report isn’t the pretty graphs, but the fact that the analysis is quite open about the limitations and ambiguities of the data.

Here’s something you can try yourself. Go and ask 10 friends the question:

With regard to your salary, you feel you:

- Are content with what you have

- Should be making more

- Are making more money than you should

How do you think they would respond? The subtle differences in how the options are worded nudge the respondent in a particular direction. So at the end, I can run the headline, “Majority of professionals are underpaid”, because 6 out of 10 think they should be making more.
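Even setting the leading wording aside, 6 out of 10 is a shaky basis for the word “majority”. As a rough illustration, the sketch below computes a Wilson score interval for that result. The helper name `wilson_interval` is hypothetical, and the calculation assumes the 10 friends are a random sample at a 95% confidence level, which they are not.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (95% by default).

    Assumes a random sample, which 10 friends are not; this only
    shows how little a sample this small can tell you.
    """
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width

low, high = wilson_interval(6, 10)
print(f"6 out of 10: plausibly anywhere from {low:.0%} to {high:.0%}")
```

The range runs from roughly a third to over 80 percent, so the same result is just as consistent with most people being content with their pay.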

Bear that in mind the next time someone quotes a figure about how big a particular problem is, and ask yourself whether the study being quoted is actually helpful, objective, transparent, and trustworthy.

If not, proceed with the required amount of cynicism.